When you intervene in a complex system, you have difficult choices to make about where and how to act. We may be fans of impact measurement in the social sector, for example, but what if it ends up driving a kind of “marketization” of the sector that pushes charities toward the biggest bang for their buck? Those choices are almost always underdetermined—you can’t know what will happen if you push here instead of pulling there. But if you’re lucky, you’ll be able to see how the system responds over time and refine your strategies accordingly.

Ten years ago, critics dismissed impact measurement as too difficult, misleading, or simply not important. Today, 75 percent of charities measure some or all of their work, and nearly three-quarters have invested more in measuring results over the last five years. A transformation in the tools that enable nonprofits to measure the impact of what they do has raised the bar significantly, but not all have grasped the opportunities offered by this kind of analysis. At NPC, to achieve our vision of a third sector where impact measurement is the norm, we help charities identify what to measure and which tools to use, as well as how to make sense of that data and communicate its value. We’re also the backbone organization in a collective impact program called Inspiring Impact, which aims to embed good impact measurement practices across the UK social sector by 2022.

Over the years, we’ve tried a range of different approaches at the sector level, with varying degrees of success. And we’re not alone: Others across the globe, including the Social Impact Analysts Association, Charting Impact, and the microfinance field’s Social Performance Task Force, are using similar methods to help organizations improve their effectiveness. But is the system responding to these efforts, and does everyone agree with the direction we’re taking? In a recent Stanford Social Innovation Review post, Caroline Fiennes suggested that we couldn’t reasonably expect charities to produce good quality, robust evidence; we should advise them to monitor their activities but leave evaluation to the experts.

Nonprofits measure results within a system

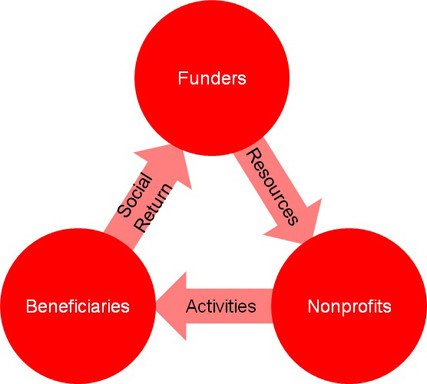

Broadly speaking, we can reduce the system to three sets of actors: funders providing resources, nonprofits delivering services, and beneficiaries receiving products.

Simplistic representation of nonprofit system. (Image courtesy of NPC, 2013)


We know from research carried out in the UK that funder requirements are the primary driver behind the increase in impact measurement among nonprofits: They are cited as more than twice as important as internal leadership. We also know that improved strategy and services are the main benefits nonprofits see as a result; by understanding the impact of their services, they are able to improve what they do.

So herein lies the paradox: Nonprofits measure their results to satisfy funders, but the main reward is a better service, not increased funding.

Stick or carrot?

These findings hint at how we could intervene in the system. Most of us would, I hope, agree that we’re more interested in the carrot (improving services) than the stick (meeting funders’ requirements).

If we want impact measurement to result in improved services and increased impact, then we have to make sure it works for the nonprofit. Only then should we turn to what funders want out of impact measurement.

This is the fundamental principle behind Inspiring Impact: making impact measurement work for nonprofits. This means it’s more a matter of practical knowledge (are we doing better now than last year?) than theoretical proof (can we attribute this change specifically to this intervention?). We’re more interested in performance management and using evidence to improve services, for example, than in randomized controlled trials.

Inspiring Impact has developed the UK’s first-ever Code of Good Impact Practice for nonprofits, alongside Funder Principles for grantmakers, based on this motivation. Both provide guidance that is accessible and inclusive, aimed at the whole nonprofit sector rather than just those already at the high end of evaluation.

These documents won’t change the world on their own, but we hope they create a rising tide. So many of the forces that influence a nonprofit—funder decisions, government policies, and socioeconomic factors—are subject to rapid and sometimes unpredictable change, so it makes sense to focus on aspects that we can control.

Power to the people

Ultimately, I am much more comfortable with the view that nonprofits should drive their own impact measurement agendas than with a paternalistic view of the world in which only experts carry out evaluations and funders make all the decisions based on the evidence those experts produce. The closer nonprofits are to their beneficiaries, the better able they are to represent them. Good impact measurement will ensure that nonprofits remain close to their beneficiaries and understand the detail and nuance of their lives. In the end, that’s an approach to raising the bar on impact that helps make us accountable to beneficiaries, the people we’re here to help.


Read more stories by Tris Lumley.