In the competition for grant funding and for influence over policy on important issues such as global warming, health care, and education, questions often arise about scientific evidence. We may all agree that we want to base our policies and programs on good evidence, but questions remain. What evidence is most useful and reliable? Which options are based on the strongest evidence? Do we have enough evidence to make confident decisions?

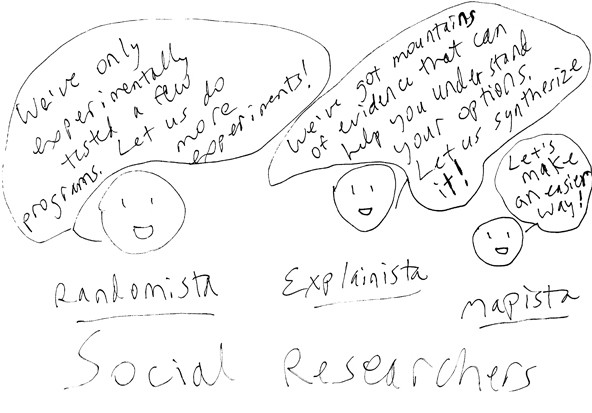

Social researchers generally take one of three approaches to these questions:

1. “Randomista” Evidence Registries

Both critics and proponents of this approach have termed it “randomista,” because it views randomized experiments and related “quasi-experimental” research designs as the only reliable evidence for choosing programs.

This approach relies on registries like the Substance Abuse and Mental Health Services Administration’s National Registry of Evidence-Based Programs and Practices, which catalogs interventions tested with experiments and quasi-experiments and is searchable by various criteria. My search for evidence on “mental health promotion” programs for “older adults” in “urban” areas “living at home,” for example, produced a list of 40 programs. For each program, the site reports whether it showed “effective,” “promising,” or “ineffective” results on measured outcomes such as depression, disruptive behavior disorders, and healthy relationships.

Randomized experiment registries show tested programs and outcomes.

These studies are helpful for answering one important question: Are our programs having the effects we think they are? However, they omit rich information about impacts that other types of evaluation can capture. They don’t indicate what about the program worked or didn’t work, for whom, or why. They also don’t show whether there were any unexpected effects. Without this additional information, it’s hard to know what will work in your unique situation to meet the unique needs of the people you serve, and most researchers can’t afford to wait for a comprehensive set of new experiments to fully answer every question.

2. “Explainista” Evidence Syntheses

Proponents of the “explainista” approach believe that useful evidence needs to provide both reliable data and strong explanation. This often means synthesizing existing information from reliable sources—such as nonexperimental and experimental studies published in academic journals, books, and reports—to save time and money.

Done well, this technique can answer many questions, including why a program was successful or not (was it the staff, partner organizations, or strategies for recruiting participants?); what the expected and unexpected effects for people, organizations, and communities were; and what recommendations people made for improving the program. This information provides a more complete picture and highlights effective “levers” that can inform decision-making.

An evidence synthesis can answer many questions.

(Two techniques for assessing a huge pile of evidence are David Gough’s “Weight of Evidence” framework and the inclusive approach described in the Department for International Development paper, “Broadening the Range of Designs and Methods for Impact Evaluations.” These techniques consider multiple aspects of research quality, such as: Did the study use appropriate methods to answer the questions? Did it use proper procedures for each method? Do the study’s data support the conclusions?)

This approach, however, also has its limitations. You are unlikely to find an existing meta-study on your topic, and even if you do, it might not include information relevant to your specific situation. And although a literature synthesis is typically faster and less expensive than a series of new experiments, few organizations have the resources to exhaustively search and synthesize studies from all literature databases and search engines.

3. “Mapista” Knowledge Maps

A newer approach—“mapista”—takes the “explainista” approach a step further and creates a more holistic knowledge map of a policy, program, or issue. Integrating findings from relevant studies into a map, researchers can visualize the understanding developed in each study, where studies agree, and where each study adds new understanding. This lets stakeholders quickly assess choices for action, including trade-offs, alternative options, and potential consequences.

Knowledge maps visualize explanations from related studies.

A more complete map might include dozens or hundreds of relevant causes, effects, and links between them (see our map of Presidential candidates’ economic policies). While this may initially sound like information overload, researchers can make a good map searchable and customizable so that various stakeholders can focus on relevant sub-topics, such as policy issues, outcomes, or the roles of partnering organizations. More information supports more-effective decisions, collaboration, and messaging.

A new analytical method, Integrative Propositional Analysis, even lets researchers quantify how complete a map’s explanation is and assess where the evidence is strong and where it is weak.
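To make the idea concrete, here is a minimal Python sketch of a knowledge map stored as a list of cause-and-effect links, each tagged with the study that reported it. Everything in it is an assumption made for illustration: the concepts, the study names, and the scoring rules are hypothetical, and the `depth` measure (the share of concepts explained by two or more distinct causes) is only a rough stand-in, not the published Integrative Propositional Analysis procedure.

```python
from collections import defaultdict

# Hypothetical causal links (cause, effect, source study).
# These are invented for illustration and come from no real study.
LINKS = [
    ("staff training", "program quality", "Study A"),
    ("partner organizations", "program quality", "Study B"),
    ("program quality", "participant well-being", "Study A"),
    ("program quality", "participant well-being", "Study C"),
    ("outreach strategy", "enrollment", "Study B"),
]


def concepts(links):
    """Return every concept that appears as a cause or an effect."""
    return {c for cause, effect, _ in links for c in (cause, effect)}


def causes_of(links):
    """Map each effect to the set of distinct causes pointing at it."""
    incoming = defaultdict(set)
    for cause, effect, _ in links:
        incoming[effect].add(cause)
    return incoming


def depth(links):
    """Share of concepts explained by two or more distinct causes (assumed measure)."""
    incoming = causes_of(links)
    well_explained = [c for c, causes in incoming.items() if len(causes) >= 2]
    return len(well_explained) / len(concepts(links))


def support(links):
    """Count how many distinct studies report each cause-effect link."""
    studies = defaultdict(set)
    for cause, effect, study in links:
        studies[(cause, effect)].add(study)
    return {link: len(found) for link, found in studies.items()}


def focus(links, concept):
    """Return only the links that touch one concept, for a sub-topic view."""
    return [link for link in links if concept in (link[0], link[1])]


if __name__ == "__main__":
    print("concepts:", len(concepts(LINKS)))
    print("depth:", round(depth(LINKS), 2))
    print("support:", support(LINKS))
    print("enrollment view:", focus(LINKS, "enrollment"))
```

In this sketch, links reported by more than one study stand in for stronger evidence, and `focus` shows how a stakeholder might narrow a large map to a single sub-topic.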

Increased use of the “mapista” approach suggests that researchers and practitioners are shifting away from expensive new studies toward the effective synthesis of existing research. This is not only more cost-effective, but also more reasonable, given our data-rich world, and it has the potential to greatly increase the effectiveness of policies and programs for the people we serve.

Read more stories by Bernadette Wright.