When leaders at the California Endowment (TCE) decided, three years in, to “hit the pause button” and commission an external assessment of their most ambitious place-based initiative to date, they sought an evaluation approach that recognized the complexity of their strategy. TCE designed the initiative, Building Healthy Communities, as a 10-year, billion-dollar effort to create equitable communities in 14 sites across California. Because contextual factors at each site would necessitate local adaptation, and the overall strategy would continue to evolve, an “off-the-shelf” evaluation of the initiative and its outcomes would simply not suffice.

More than halfway across the country, another foundation faced a similar dilemma. The Grand Rapids Community Foundation (GRCF) was engaged in a complex, multi-faceted effort to improve college access and success for a whole generation of disadvantaged students. The initiative, Challenge Scholars, would offer “wrap-around supports” (engaging schools, colleges, businesses, and other community institutions) for students and their families, helping prepare students academically, socially, and emotionally to succeed in higher education. The evaluation of the initiative would have to recognize the involvement of various partners and adapt to an ever-changing environment.

TCE and GRCF are just two of the funders actively “shifting the lens” on how they approach evaluation in light of complexity. They are building on the recognition that the traditional approach to evaluation (assessing specific effects of a defined program against a set of predetermined outcomes, often in a way that ties those outcomes back to the initiative) is increasingly falling short. The message emerging is clear: evaluation needs to accommodate complexity, not “assume it away.”

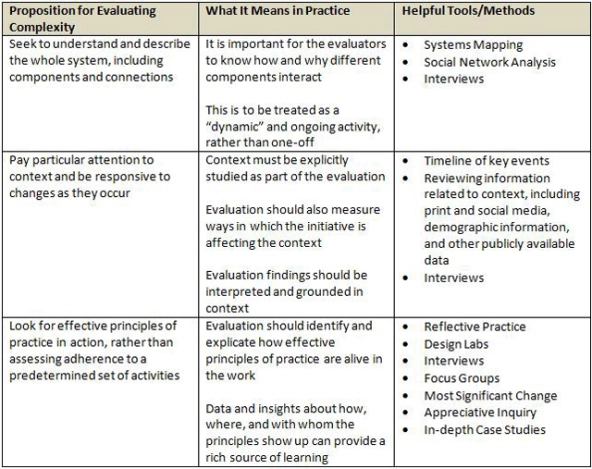

Based on our work with TCE, GRCF, and numerous other clients, my colleagues at FSG and I articulated a set of nine “propositions” in a recent practice brief to help guide how we conceptualize, design, and implement evaluations of complex initiatives. We derived these propositions from what the emerging field of complexity science tells us are distinctive characteristics of complex systems. We know, for example, that complex systems are always changing, often in unpredictable ways; they are never static. Hence, we need to design evaluations to be adaptive, flexible, and iterative, not rigid and cookie-cutter.

Below are three of the propositions in more detail, along with tools and methods that can help apply the proposition in practice.

It is important to note that many of the traditional tools and methods that form the backbone of sound evaluations—such as interviews, focus groups, and surveys—are still relevant. We would, however, suggest that organizations adapt those methods to reflect a complexity orientation. For example, interviews should explore the role of context; we should not confine them to the initiative’s boundaries. Focus groups should seek to understand local adaptation, not just adherence. And surveys should probe for relationships and interdependencies, not just perceived outcomes. In addition to traditional methods, we suggest incorporating newer, innovative techniques that provide a richer narrative, including:

- Systems mapping—an iterative, often participatory process of graphically representing a system, including its components and connections

- Appreciative inquiry—a group process that inquires into, identifies, and further develops the best of “what is” in organizations

- Design thinking—a user-centered approach to developing new solutions to abstract, ill-defined, or complex problems

As social initiatives grow more complex and strategies become more emergent, we need to open up a conversation about what it would take for evaluation to remain relevant and useful. Such a conversation would help funders and nonprofits engaged in complex social change invest in the right kinds of evaluations and benefit from their evaluation and learning efforts.

Read more stories by Srik Gopal.