Recently, we have been seeing widespread rejection of experts and evidence. From the election of the first president in US history to have neither government nor military experience to the rise of fake news, evidence and expertise are getting short shrift. This is a perilous trend, and we need to fight against it, both in general and in the social sector, where making better use of evidence and increasing its role in decision-making is crucial to achieving social change at scale.

Consider: social sector organizations everywhere are under increased pressure to maximize their resources, whilst funders and investors want to make the best use of their money to meet growing need. Efficiency is therefore key. But efficient operations need evidence to stay on track. Evidence can reveal why and how approaches have or haven’t worked. Good monitoring and evaluation can thus inform program improvements and revisions, guide future activities and development, bolster efforts to raise awareness of an issue, educate the sector and those outside it, and influence funding decisions.

Ignore evidence, or keep lessons to ourselves, and we may find ourselves believing in false economies and then misallocating resources. As a result, we may achieve less than we’re capable of, or even, in a worst-case scenario, harm the people or causes we intend to help.

Proportionate evaluation

In the best-case scenarios, social service organizations use evidence, and draw on users’ feedback and input, to inform decisions about which needs they focus on meeting and how they go about it. These organizations also gather evidence to learn how their interventions fit in alongside existing external activities. A successful impact management approach, in other words, involves appropriate evaluation, and regular adaptation in response to data, as well as regular knowledge sharing to inform all stakeholders and the sector at large.

It’s also proportionate, in that it reflects the level of available evidence, the evidence requirements of your situation, and the stage of development of the initiative being considered (from innovation through to replication). We do not need trials in every school to prove the positive impact of education—instead we monitor quality, and the effectiveness of various new approaches. And while we will need to evaluate a new school-based peer support intervention, for instance, we may not need to utilize the same type of evaluation in every school in which it is ever implemented.

A well-functioning evidence system

The development of shared approaches has its risks. (Developing common measures, for example, can lead to losing nuance.) But, done right, it can ease the gathering and sharing of evidence, whatever its nature, which in turn brings fantastic opportunities for sharing learning and, ultimately, increasing impact.

Ideally, new evaluation activity will build on existing evidence (rather than duplicating it), and organizations will strike a good balance between looking back (retrospective consideration and measurement of ‘what works’) and looking forward (focusing on learning, taking action, and iterating to develop new and potentially more effective solutions).

Funders should find those same sorts of data streams equally useful. Donors tend to prioritize their own requirements and evaluation measures over listening to their grantees. But that’s less of a problem if they are sufficiently engaged with their ‘end’ users and the relevant data and learning for the sector they fund. In fact, if funders are engaged with the beneficiaries they hope to serve, and if they are considering relevant data, they may be better able to spot evidence gaps, and more willing to seek input from the organizations they support.

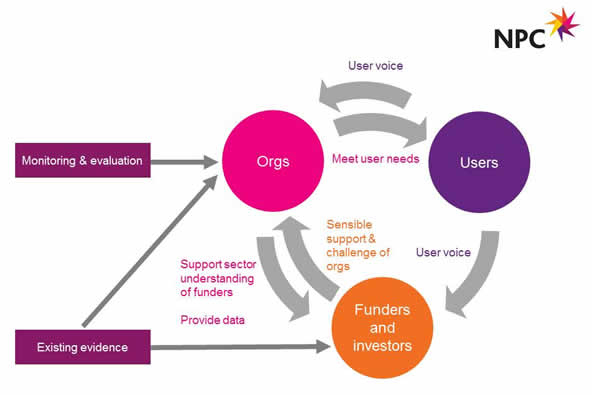

Creating a virtuous circle (shown in Figure 1) is a good goal. With this approach, organizations are empowered to work in partnership with their funders, and philanthropy is better informed by immediate evidence and thus more in touch with target beneficiaries. A well-functioning, evidence-informed system involves timely use of existing, relevant data and sensible generation of new data by all key players.

The virtuous circle of social intervention: a well-functioning, evidence-informed system. (Image by New Philanthropy Capital)

Harnessing new trends in evaluation practice

There is some good news to relate on this front. The Center for Effective Philanthropy recently reported an increasing focus among large-foundation CEOs on just such collaborative and evidence-based practices. And our new NPC report, “Global Innovations in Measurement and Evaluation,” attests to changes in both measurement and evaluation practices, with a growing emphasis on shared approaches and user-centric evaluation. This is encouraging to see. (One example is The Palliative Care Outcomes Collaboration in Australia, which uses aggregated data from standardized assessment tools to understand and improve outcomes for palliative care patients, and to report on progress across the palliative care sector.)

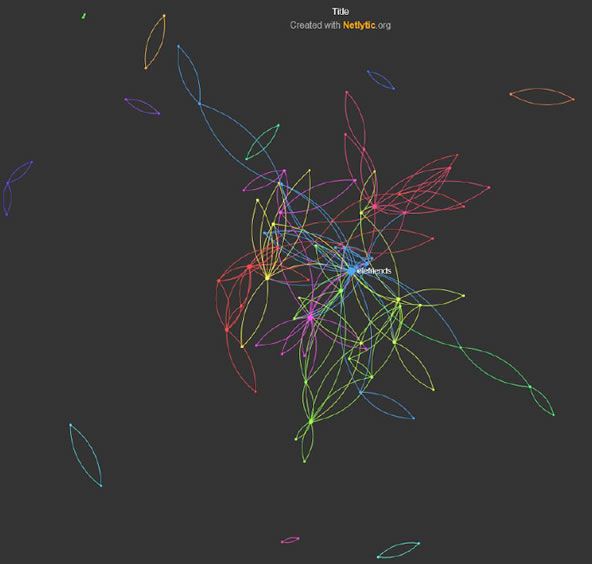

But we need to leverage this momentum. We also need to ensure that the social sector capitalizes on recent advancements in big data, remote sensing, and data visualization to share data more clearly and quickly, and to bring the full power of data and analysis to bear across an increasingly large range of social issues. As an example, consider how network analysis performed using the Netlytic tool contributed to an evaluation of the UK Mind Elefriends program, an online forum providing peer-to-peer mental health support. The results, shown in Figure 2, revealed that users tended to group into relatively small clusters instead of forming wider peer networks, an insight that has helped the charity determine how best to spread information across the forum.

Each point in this network analysis visualization represents one Elefriends user. The colored segments show wider clusters. (Image from “Social Media and Austerity: Online Peer Support in Mental Health Communities”)

We in the social sector can do better with our use of evidence. And we can surmount some, if not all, of the evaluation challenges that we face, if we put our hearts and minds to it. If we make the most of our shared expertise, and build on these growing trends, we can achieve a more user-centered and effective social sector.

Read more stories by Anne Kazimirski.