Last November, the US House of Representatives unanimously passed the Foundations for Evidence-Based Policymaking Act of 2017 (HR 4174), and it looks slated to pass the Senate this year. In an era known for the absence of legislative consensus, this bipartisan bill addresses one of the few issues on which there is near universal agreement: To improve the effective delivery of social policies and programs, we need the efficient production and use of rigorous evidence to become a routine part of government operations and public policymaking. Although government has long collected administrative data, increasingly in digital form, government agencies have struggled to create the infrastructure and acquire the skills needed to make use of sensitive and personally identifiable information to improve governing.

As urgent as the legislation’s provisions are to support more evidence-based policymaking by mandating an inventory of all government data, the bill focuses exclusively on enabling government to use its own data better without considering the needs of nonprofits. This is a mistake.

Nonprofits represent more than five percent of GDP in the United States, and provide direct social services and assistance to millions of people, often with the support of government grants. If we do not make the same data infrastructure—including tools, governance mechanisms, and trained personnel—available to the social sector, it is as if we are building a road designed for trucks, but not cars. Before we can ensure that taxpayer dollars are well-spent on programs that work, we need to create a data infrastructure that enables both government agencies and nonprofits to use administrative data to evaluate their programs.

Fortunately, the means to do so already exist and require no new legislation: enabling the nonprofit sector to take advantage of “data labs,” the systems that governments and universities are building to securely provide administrative data for research and program evaluation.

What Exactly Is Administrative Data?

Governments around the world collect and process troves of so-called administrative data: personally identifiable information about the people they serve. In the United States, the Social Security Administration records social, welfare, and disability benefit payments to nearly the entire US population. State governments collect computerized hospital discharge data for both government (Medicare and Medicaid) and commercial payers. Use of personal administrative data for research and policy development is not new. Over the years, administrative data has served as evidence for many academic research projects studying, for instance, the impact that simplifying the college financial aid process has on access to education, or the influence housing voucher programs have on moves to lower-poverty neighborhoods. But until recently, academics and research organizations have had to jump through hoops to access administrative data for evaluation. Some government departments take a progressive view of data use and have established data sharing gateways, while others are extremely risk averse.

The Rise of the Data and Policy Evaluation Lab

As the private sector has come to understand the value of data and data linking (combining different sources of data about a common person, group, or geographic area), as well as the analytics and insights that can be gained from them, government departments have become more supportive of data collection and analysis models that enable secure access to administrative data while maintaining privacy. They are starting Data Labs or Policy Evaluation Labs designed to make administrative data easier to combine, use, and share ethically and responsibly.
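To make the idea of data linking concrete, here is a minimal sketch of how two administrative datasets about the same people might be combined without carrying the raw identifier into the linked output. Everything in it is an illustrative assumption rather than a description of any real lab's system: the field names, the `LINKAGE_KEY` secret, and the `pseudonym` and `link_records` helpers are all hypothetical, and real labs typically face messier problems such as misspelled names and missing identifiers.

```python
import hashlib
import hmac

# Hypothetical secret held only inside the secure facility (an assumption
# for this sketch; a real key would never be hard-coded).
LINKAGE_KEY = b"demo-key-held-by-the-lab"


def pseudonym(national_id: str) -> str:
    """Turn a raw identifier into a keyed hash so the raw value need not be shared."""
    normalised = national_id.strip().upper().encode("utf-8")
    return hmac.new(LINKAGE_KEY, normalised, hashlib.sha256).hexdigest()


def link_records(records_a, records_b, id_field="national_id"):
    """Join two lists of dicts on the pseudonymised identifier.

    Only people present in both sources are returned, and the raw
    identifier is dropped from every linked row.
    """
    index = {pseudonym(row[id_field]): row for row in records_b}
    linked = []
    for row_a in records_a:
        token = pseudonym(row_a[id_field])
        row_b = index.get(token)
        if row_b is None:
            continue  # no match in the second source
        merged = {k: v for k, v in {**row_a, **row_b}.items() if k != id_field}
        merged["link_token"] = token
        linked.append(merged)
    return linked


# Made-up example data: a housing dataset and a benefits dataset.
housing = [
    {"national_id": "ab123", "voucher": True},
    {"national_id": "CD456 ", "voucher": False},
    {"national_id": "EF789", "voucher": True},
]
benefits = [
    {"national_id": "AB123", "monthly_benefit": 410},
    {"national_id": "cd456", "monthly_benefit": 0},
    {"national_id": "ZZ000", "monthly_benefit": 120},
]

linked = link_records(housing, benefits)
print(len(linked), "people appear in both datasets")
```

The design choice worth noting is that the join happens on a keyed hash rather than on the identifier itself, which is one simple way to keep the combined dataset useful for analysis while limiting what it reveals about any individual.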

The Washington State Institute for Public Policy, for example, carries out policy evaluations and cost-benefit analyses for state policy projects at the behest of the Washington State Legislature using state data. The Rhode Island Innovative Policy Lab, a collaboration between the Rhode Island Governor’s office and Brown University, brings together government officials, researchers, and administrative data to perform policy evaluation and to design and test interventions. Actionable Intelligence for Social Policy at the University of Pennsylvania supports states in creating integrated data systems that link individual-level administrative data across multiple agencies to enable better evaluation of government programs and policies. And a collaboration of universities across the United Kingdom, the Administrative Data Research Network, has led the development of safe models for data sharing. It has also influenced new government legislation (the Digital Economy Act) to improve access to administrative data for government departments as well as external researchers. (For more on data labs, see the GovLab-NPC case studies on data labs.)

The UK Justice Data Lab Model

Charities, like academics, have had difficulty accessing administrative data for research and evaluation purposes. In addition, underdeveloped analytical skills and low capacity across the sector act as a barrier to the effective use of data that would enable charities to understand whether the services they provide have the intended impact.

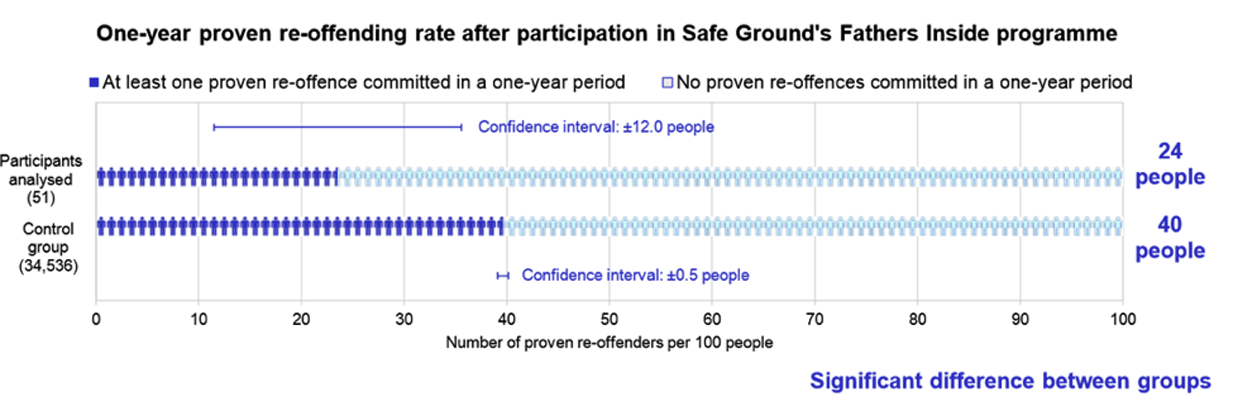

Responding to these challenges, in 2013 NPC collaborated with the Ministry of Justice to set up its Justice Data Lab (JDL), a secure facility within the Ministry of Justice in the UK. It allows service providers to evaluate the impact of their intervention by sending their data to the lab, whose small team of trained staff compares the outcomes of the provider's service users with those of a matched control group who did not receive the same services, and provides aggregated statistics back to the service provider. For example, Safe Ground is a charity that works with offenders and ex-offenders to improve their relationship skills. In December 2016, its Fathers Inside program was shown to reduce reoffending by a statistically significant margin compared to a matched control group. This was thanks to an evaluation conducted by the JDL, without the need to give any confidential data to the charity.

Re-offending behavior after participation in the Safe Ground’s Fathers Inside program. (Image courtesy of the Ministry of Justice, 2016)
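The matched-comparison workflow described above can be sketched in a few lines of code. This is a simplified illustration under stated assumptions, not the JDL's actual method: the `Person` fields, the greedy nearest-neighbour matching on age and prior offences, and the `min_group_size` threshold are all hypothetical stand-ins, and the example data is made up. What the sketch aims to show is the shape of the exchange: individual records stay inside the lab, and only aggregate rates go back to the provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Person:
    person_id: str
    age: int
    prior_offences: int
    reoffended: bool  # outcome held by the lab, never returned per person


def match_controls(participants, pool):
    """Greedy 1:1 matching: pick the closest unused person from the pool.

    Closeness here is a simple sum of differences in age and prior
    offences (an assumption made for illustration).
    """
    available = list(pool)
    matched = []
    for p in participants:
        best = min(
            available,
            key=lambda c: abs(c.age - p.age)
            + abs(c.prior_offences - p.prior_offences),
        )
        available.remove(best)
        matched.append(best)
    return matched


def aggregate_report(participants, pool, min_group_size=3):
    """Return only group-level statistics, never individual records."""
    if len(participants) < min_group_size:
        raise ValueError("group too small to report without disclosure risk")
    if len(pool) < len(participants):
        raise ValueError("comparison pool is smaller than the participant group")
    controls = match_controls(participants, pool)
    treated_rate = sum(p.reoffended for p in participants) / len(participants)
    control_rate = sum(c.reoffended for c in controls) / len(controls)
    return {
        "group_size": len(participants),
        "treated_reoffending_rate": treated_rate,
        "control_reoffending_rate": control_rate,
        "difference": treated_rate - control_rate,
    }


# Made-up data: people who took part in a program, and a wider pool who did not.
participants = [
    Person("P1", 25, 2, False),
    Person("P2", 40, 5, True),
    Person("P3", 31, 1, False),
    Person("P4", 52, 3, False),
]
comparison_pool = [
    Person("C1", 26, 2, True),
    Person("C2", 39, 5, True),
    Person("C3", 30, 1, False),
    Person("C4", 50, 3, True),
    Person("C5", 19, 0, False),
    Person("C6", 60, 8, True),
]

report = aggregate_report(participants, comparison_pool)
print(report)
```

A real evaluation would also need a test of statistical significance and a far larger sample; the sketch omits both to keep the flow of data in view.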

NPC’s experience of facilitating data labs has shown that measuring a shared social sector outcome, such as whether a service contributes to reduced reoffending, makes research directly relevant and quickly applicable to many organizations.

Policymakers and procurement officials also benefit from the UK data lab model. They can use results for outcomes-based procurement, as well as better decision-making; as the evidence of interventions that are likely to succeed grows, they can do more meta-analysis to understand which programs are most effective. Researchers can use data lab reports to supplement program evaluations and use the time saved to focus on qualitative results.

Ultimately, the core reason for the data lab is to encourage social service providers to evaluate their provisions so that service users receive effective care. Participating organizations receive not only accessible and statistically robust reports, but also a clear route to accessing this analysis, insight into the effectiveness of their programs, and evidence to share with commissioners.

Since April 2013, 204 interventions have been evaluated through the JDL. The provision of standardized, high-quality statistical reports has developed the JDL staff’s data science and communication skills (skills used across the department). Staff are continually refining and testing their analytical models, and engaging with charities to understand the context of the projects they evaluate. Further, the JDL has a maximum of five staff, who use existing paid or open source software, so the service has been cost-efficient compared with commissioning 204 separate evaluations.

Giving Charities Access to the Same Infrastructure

New state-level data labs should retool, with philanthropic sector support, so that US-based and other policy labs can offer their analytical services and data access to the social sector by adopting the JDL model. This offers numerous advantages. First, it keeps the personal data of individuals securely within the government department while organizations receive aggregated results in a public report, thereby protecting the privacy of individuals. Second, it provides access to the trained personnel needed to conduct the evaluations without the charity having to hire those people directly. Third, it offers an efficient system for generating standardized evaluations. With the linked and integrated datasets in a secure facility, there is still the option for accredited researchers to conduct further research. But making this data accessible to charities could create a powerful yet practical asset for understanding the mechanisms of social impact.

Although the JDL was initially marketed to the charity sector, it has been open to all users, with enthusiastic take-up among private and even public sector organizations. This indicates that charities are not alone in lacking the capacity and capabilities to work with complex administrative datasets. The fact that prisons have used the JDL to evaluate their programs, even though they are in a better position than charities to access government data, speaks volumes.

If we fail to recognize the contribution that charities can make to the development and application of research, we will unnecessarily restrict the potential for administrative data to do good.

Read more stories by Tracey Gyateng & Beth Simone Noveck.