This post is from PopTech Editions III—"Made to Measure: The New Science of Impact," which explores the evolving techniques to accurately gauge the real impact of initiatives and programs designed to do social good. Visit PopTech for more interviews, essays, and videos with leading thinkers on this subject.

Passeggiata nel deserto - North Horr (Image: Luciano Rizzello)

It is 1987. I am leaning against a Land Rover in the Chalbi Desert, in northern Kenya, about 15 kilometers south of Ethiopia. The dry, heavy heat feels like the inside of a pizza oven. Sand whips around on gusts of torrid air, stinging my exposed skin.

A program leader and I are speaking with members of the Gabbra community, nomadic camel pastoralists who live in this ascetic land. We are discussing the one thing the Gabbra need more than anything else to survive out here: water access. We are pleased to learn that the Gabbra are benefiting from better water supplies provided, in part, by our initiative.

I am glad that our work is making a difference. As the Gabbra move off, I turn to the program lead, Dilly. “What should I learn and remember from all this?” I ask.

Dilly is an old hand here, hardened by years of exposure to sun, sand and wind. He is wise and thoughtful. “Always be willing to look back and ask hard questions about what you have done,” Dilly says. “In the end, you are intervening in people’s lives, and that carries enormous responsibility.”

I’ve tried to follow that advice through the years, and I have come to learn that he was actually speaking about two separate things that day as we chatted in the desert. The first is the responsibility to honestly and accurately monitor impact. Organizations are responsible for the work that they have done. That responsibility is to the people who were supported—and not to donors.

Monitoring to me has always been the regular and rapid collection of qualitative and quantitative data that allows me to reflect on programmatic progress and impact over a long period of time. The real value of monitoring is looking back well after a project has been completed to learn what happened over the long term.

"la terre" (Image: Meaduva/Flickr)

The point of a water project, after all, is to make sure that water flows—forever. That is what is meant when organizations use the word “sustainable.” Sustainable means that clean water becomes expected, not rare. It means that inevitable technical and social problems are addressed as they emerge so that supply disruptions are minimized. It means that systems and finances are in place so that water infrastructure can be replaced when needed. Organizations will only know these key results if they bother to stick around, look, listen, reflect and learn.

It is perhaps no coincidence that the development community’s biggest successes, the campaigns to eradicate diseases like polio and smallpox, are precisely the ones that made monitoring central to their work.

Sadly, that kind of vigilance is rare. “Monitoring” in the global development community more frequently consists of watching a program roll out. The same routine goes on time and time again: approach philanthropists, tell stories of hardship and suffering, suggest that your organization has a solution, accept donations, track only the implementation of that solution, and then celebrate when project implementation is complete.

"Life saving - drinking water" (Image: Julien Harneis/Flickr)

Even among water and sanitation sector organizations that should know better, accurate monitoring has, to put it kindly, been elusive. Many organizations are endlessly “developing our indicators,” while others are forever bickering about a lack of agreed-upon ways to measure progress.

These kinds of excuses make less and less sense. Some agencies argue that they need to be paid for this long-term monitoring work, but financing has never really been a huge factor in my mind or experience. With water, if you have paid staff, vehicles and petrol, then monitoring is possible. The explosion of technologies like cell phones and the long-overdue recognition that people on the ground can actually comment on their own development make other excuses invalid.

But that day in the desert, Dilly was also talking about how to run an organization. Monitoring over time is a statement that impact matters, that organizational improvement matters, and that reflecting honestly on results, good or bad, will guide how we impact the people we work with around the world. Development is fluid. The best organizations are those that take information and use it to improve. They are driven by problem solving rather than ideological adherence to “our model.”

Those kinds of organizations are nimble, dynamic, critical, thoughtful and responsive. Monitoring is not a stick to bash people for bad results, nor is it a shiny lure to entice donors. It’s a vital tool for organizational improvement and innovation.

Organizations that do this well build on strengths and embrace the challenge of addressing weaknesses. They let the staff closest to the ground drive solutions with a clear eye on audacious programmatic goals. They understand that data points mean little on their own. Instead, they think in terms of movement and trends, and focus on constantly improving and getting trends headed in the right direction. They do not blink at “customer feedback” and they find creative ways to make data visual for communities to engage and comment. Bad news does not shock or scare but rather inspires. That is how companies operate and succeed, by innovating and then constantly evaluating feedback.

This all works best if the target of monitoring is not an external organization—even though external agencies can benefit from results and reflections. The focus has to be on internal improvement, with a goal of improving the lives of the people we work with and not pleasing donors.

Failure, in contrast, is the refusal to monitor, learn, reflect and change.

Building dynamic, problem-solving, monitoring-focused organizations like this is not easy, but when it happens, transformative programming becomes possible.

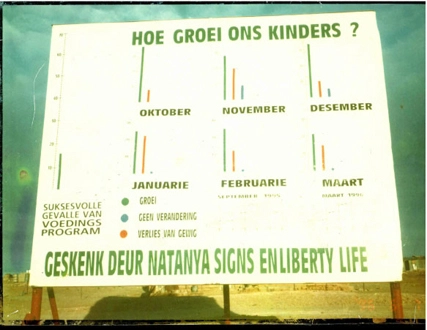

"The data on this picture is my favorite because the results are not great." (Image: Ned Breslin)

I’ve tried to apply Dilly’s advice throughout my career. In the 1990s I was working on an integrated nutrition program targeted at poor communities throughout South Africa. We put a billboard at each entry point to a community that graphically showed seasonal fluctuations in child growth. The data on this picture is my favorite because the results are not great: they show spurts of unhealthy weight loss (red) scattered throughout the year. The target of this monitoring work was my organization and the participating communities, and it allowed us to jointly explore what we needed to improve. We could also combine the data from each individual community into regional and national results that showed, at a macro scale, how we were doing, what lessons were being learned, and how all this was trending. But the real work was at the grassroots level, and the power of monitoring, together with an organizational commitment to adaptation and change, meant that field staff had huge authority to address the problems identified locally.

The best organizations focus on the constantly changing environment around them and do not fear so-called bad results. That is so vital because in development work we are intervening in people’s lives, and as Dilly said so wisely, that comes with enormous responsibility.

Read more stories by Ned Breslin.