Tewodros Melesse became director general of the International Planned Parenthood Federation (IPPF) in September 2011. The organization was then in the midst of an ambitious effort to boost the performance of its member associations—to encourage and enable them to deliver more services to more clients in more parts of the world. The initiative was only about a year old, but Melesse felt a keen sense of urgency. Previously, while serving as director of IPPF’s Africa region, he had learned just how important performance was to the future of the organization and its clients. In his home country of Ethiopia and in other parts of the region, performance lapses by IPPF associations could mean that thousands of people would not receive crucial care. So he set an audacious goal for the federation: By 2015, it would increase the number of sexual and reproductive health services that it delivered to 176 million. That figure would represent a doubling of the number of services that IPPF had delivered in 2010.

In mid-2010, IPPF leaders had seen the need to tackle a major federation-wide challenge. Indeed, IPPF was at risk of entering a period of potentially terminal decline. Some IPPF member associations had failed to evolve as the world around them had fundamentally changed, and as a result IPPF’s value proposition to funders had eroded. “We had to take a look at how we operated and drive more productivity for each donor dollar. We had to boost our performance. If we didn’t, we were in danger of failing vulnerable groups often forgotten by other organizations,” says Melesse. Perhaps the most daunting task for IPPF leaders was to persuade member associations to improve performance. A push to retool the organization for the 21st century would work only if every association bought into it.

Today, Melesse looks back with pride at what IPPF accomplished over the past four years. Not only has it greatly increased its level of service delivery around the world, but it has also nurtured a new performance culture. In fact, performance has become a focus of conversation throughout the federation—from the IPPF boardroom down to clinics in remote parts of the world. As a result, member associations can better articulate their value proposition to funders, and they’re able to make more-informed management decisions.

Performance-based funding, capacity building, delivering “value for money”: These have become hot topics in the fields of philanthropy, foreign aid, and international development. In an age of austerity, organizations are feeling pressure to do more with less. IPPF’s experience suggests that even large, diverse, and complex global institutions can succeed in this new environment. They can do so by working from the ground up—by matching carefully designed incentives with practical tools in a way that fits their organizational culture.

Facing a Risk-Filled Future

IPPF, founded in 1952 and based in London, is a global organization whose mission is to improve women’s reproductive health. Its grassroots member associations provide family planning services, sexual health care, comprehensive sexuality education, and access to safe abortion. They also promote sexual and reproductive health through their advocacy efforts. Member associations work with hard-to-reach populations in some of the world’s toughest environments: post-typhoon Philippines, war-torn Syria, refugee camps in Uganda, and brothels in Bangkok, for example. In many places they are the only provider of sexual and reproductive health services. Their mission involves serving some of the world’s most marginalized groups: the poor, young people, sex workers, men who have sex with men, people who inject drugs, and prisoners, among others. In fact, according to IPPF, four out of every five people who use its services belong to one of those marginalized populations.

A client gets contraceptive advice from an employee at an IPPF-affiliated office in the town of Hebron. (Photo by Graeme Robertson, courtesy of IPPF/Palestine)

By 2010, a combination of factors was putting the future of IPPF and its member associations at risk. As a result of population shifts, some well-established IPPF clinics had become significantly less able to serve potential clients. Kampala, Uganda, for example, saw its population more than double over the course of two decades as migrants streamed into the city from rural areas. As the city grew, some women found that they had to travel farther to access IPPF services; many of them, unable to make the journey, were left without basic reproductive care. The international development community, meanwhile, had given less and less emphasis to issues such as family planning and safe abortion access. The initial version of the Millennium Development Goals (issued in 2000) made no reference to sexual and reproductive health, for example.

Those challenges, moreover, came to a head just as the funding landscape for IPPF was beginning to change in profound ways. In the early 2000s, several important donors switched their attention from reproductive health services in general to the treatment of HIV/AIDS in particular. The US government, for its part, cut funding for IPPF because the federation provides abortion counseling and abortion services.

During and after the recession of the late 2000s, aid agencies came under intense pressure from taxpayers to stretch every penny. In 2008, the UK Department for International Development (DFID) launched the Independent Commission for Aid Impact, a watchdog group that evaluates aid projects for their effectiveness and value. Other aid agencies took similar steps. The US Agency for International Development began disbursing some funding for health in developing countries on a pay-for-performance basis, and the director general of the Swedish International Development Cooperation Agency announced an effort “to introduce a more results-based approach to management.”

In addition, donors expressed concern about the variability in performance across the federation. “At the central level, there’s no question: IPPF is excellent. But at the country level, it’s very uneven,” one funder noted. Another funding partner observed, “There are some member associations that are great, while others are not so good.” Pressure from donors sent a strong signal to IPPF leaders at both a central level and a regional level. “We were operating in a funding environment that was dramatically different,” Melesse recalls. “We knew that if we didn’t up our game, we were in danger of falling. We had to act and act fast.”

Designing for Flexibility

The federation encompasses 152 independent member associations. Those associations employ more than 30,000 staff members, and they provide services in 172 countries in six regions (Africa, Arab World, East and South East Asia and Oceania, European Network, South Asia, and Western Hemisphere). The performance of those associations was highly uneven: Each of them had a different level of capacity and offered a different array of services.

Some associations were models of effectiveness. The association in Bolivia, for example, had implemented a sophisticated enterprise technology solution to manage its operations in real time. It set annual performance standards for clinics, and it held frequent meetings to identify opportunities to increase efficiency and financial sustainability. Other associations, however, still operated much as they had for several decades. They had limited resources to invest in new systems, relying instead on large metal file cabinets that overflowed with paper records. In that kind of environment, shortages of contraceptives and limited service options were the norm.

The focus within IPPF on serving hard-to-reach locations further complicated efforts to monitor performance. Tracking clinic-level data, for example, could be particularly challenging when the “clinic” was a tent in a refugee camp or a box on the back of a motorcycle. Staff members at that level often struggled to report the number or kinds of services that they delivered. Lacking such data, associations found it difficult to make informed management decisions or to present a compelling case to donors.

IPPF has a 24-member governing council and six regional directors, along with a central office in London that includes the director general and other executives. In addition, there is a secretariat, which encompasses the central office and the six regional offices. Together, those entities set policy for the federation. But each member association has its own leadership, its own governing board, and its own history. A for-profit corporation as large as IPPF could simply order its branch offices to implement a new policy, but as a federation, IPPF faced a much tougher challenge. Its leaders had to persuade each association to implement any new federation policy; they couldn’t dictate that policy from above.

During the period when IPPF began to confront its performance challenge, its director general was Gill Greer. (She served in that role until her five-year term ended in the fall of 2011. That year, the IPPF governing council appointed Melesse to take her place.) Greer and her colleagues saw that many potential solutions to that challenge would be deeply painful to implement. IPPF could, for example, push financial responsibility down to individual associations, making them fully accountable for raising funds and therefore more sensitive to donors’ demands for performance. But doing so would run the considerable risk that some associations might simply fail. More drastically, IPPF could expel lower-performing associations from its network. That approach would drive greater accountability and increase the network’s average performance. But going that route would also impair IPPF’s commitment to underserved women, undermine its ability to speak with a truly global voice, and threaten the very nature of its federated structure.

Women gather at an improvised IPPF-affiliated family planning clinic after an earthquake in 2010. (Photo by Toan Tran, courtesy of IPPF/Haiti)

Greer and other federation leaders concluded that they needed to adopt a flexible approach. What worked for Bolivia wouldn’t work for Bhutan, and what made sense in the Netherlands wouldn’t make sense in Nigeria. Equally important, the federation had to accommodate the dedication of its member associations to helping those trapped in dire situations and to advocating for reproductive rights and quality health care. Unlike other aspects of association performance, those activities are not readily measurable.

In August 2010, in response to the external and internal challenges that IPPF faced, Greer created a task force whose mandate was to build a performance culture from the ground up. John Good, finance director of IPPF, led the task force. Joining him were staff members from the secretariat in London and people from each of IPPF’s six regions, along with several external consultants. (Redstone Strategy Group, a consulting firm that works with clients in the social sector, provided technical assistance, analysis, facilitation, and project management. The William and Flora Hewlett Foundation, a longtime supporter of IPPF, funded the design and pilot-testing phases of the effort.) As the task force got under way, Good summarized the core challenge that it would seek to overcome: “We’ve not tied our investments and outputs together. We need to deal with the culture of the organization.”

Mounting a Principled Response

One significant form of influence that IPPF has over its member associations is its control of centralized funding. Each year, the federation raises more than $100 million that it then distributes to associations around the world. Over time, it had come to dispense much of this funding on the basis of how much money each association had received the previous year. As a result, the link between performance and resource allocation had become tenuous. The most successful associations (such as those in Cambodia, Ethiopia, and Indonesia) raised all or most of their funds on their own. But many associations had grown comfortable with receiving an annual grant from the secretariat, regardless of how well they used it. Those associations had little incentive to make performance improvements.

Developing a new funding system—one that would use incentives to drive performance improvements—was therefore a primary goal of the task force led by Good. Such a system would require a set of tangible, verifiable indicators that IPPF and the member associations could clearly track. But Good and his colleagues knew that simply rewarding member associations for the quantity of services that they provided would not work. Any performance-based resource allocation system would need to honor the values of IPPF.

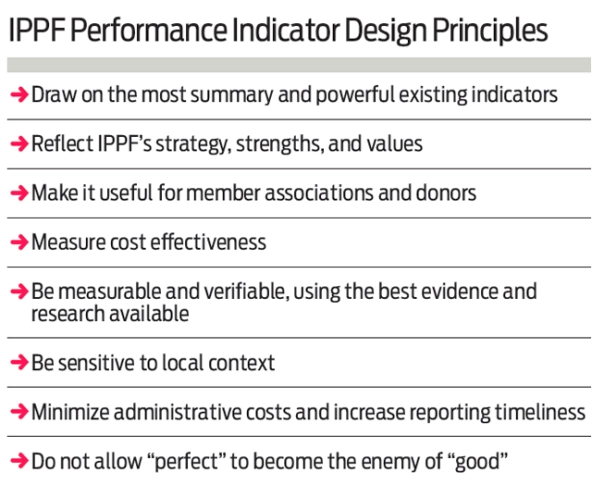

So before designing the new system, Good and his colleagues hammered out a set of overarching principles to guide that effort. The system would need to make it easier not just for the secretariat to make funding decisions, but also for individual associations to measure their own performance. In creating the system, IPPF needed to be sensitive to the circumstances of each local environment, and it could not assume that different associations in different regions could achieve the same results. The federation could not let financial incentives compromise its mandate to provide high-quality services to those who need them most, and it needed to ensure that performance in the areas of advocacy and comprehensive sexuality education would be on an equal footing with more easily quantified results. (See “IPPF Performance Indicator Design Principles” below.)

Agreement on these principles allowed staff members and stakeholders from all parts of the federation to discuss and evaluate a wide range of performance indicators. By April 2011, the task force had drafted a list of indicators: “number of HIV-related services provided,” “number of couple years of protection,” and so forth. That spring, Good and other task-force members traveled around the world to pilot-test those indicators. They made site visits, for example, to the associations in Bolivia, Cambodia, Ghana, India, and Uganda—associations that covered different IPPF regions and represented different levels of organizational scale and maturity. During this phase, they worked to align the draft indicators with IPPF’s goals and principles, and they identified and resolved obstacles to making the indicators work in various local contexts.

Following a series of region-level meetings in Africa, South Asia, and the Western Hemisphere, the task force was able to finalize a set of 10 indicators. IPPF had previously established a strategic framework that is built around “five As”—adolescents, AIDS, abortion, access, and advocacy—and the task force aligned the 10 performance indicators with those five strategic priorities. (One of the indicators related to “adolescents,” for instance, was “provision of essential elements in sexuality education program.”)

Embracing Incentives

Despite the effort by Good and his team to build a system that would be broadly acceptable, that system did not win immediate approval from all quarters. The task force reported receiving “strong pushback” from some parts of the federation. At a handful of larger associations, for example, people resisted the idea that they should have to conform to standards designed for smaller and often less effective associations. IPPF, in short, faced a challenge that is especially acute in a federated structure: It wasn’t enough just to know the best way forward. Now the federation had to earn the support of leaders at the association level.

Toward that end, IPPF leaders planned to conduct a multi-year pilot of the performance-based funding system. They would launch the pilot in late 2011 with eight member associations. The following year, they would expand it to include 25 to 30 associations, and in 2013 they would roll it out federation-wide. Varun Anand, operations and finance director for the South Asia region, largely attributes the success of the system to this staged rollout: “The staggered approach and the group consultation in the development stage secured complete buy-in from regional and member association boards and senior management.”

Along with giving IPPF leaders a chance to fix any kinks in the new system, the pilot had another benefit: It created a cohort of early champions. Anand notes that a “great boost” in support among leaders at the association level occurred once they were able to see the system in action. Many of them, Anand explains, had worried that a quantitative system would undermine IPPF’s commitment to “providing rights-based services, information, and programs.” But observing how the IPPF task force had balanced quantitative with qualitative indicators helped put that concern to rest. Ultimately, then, the task force had succeeded in laying the groundwork for widespread adoption of the new system. “By deciding to develop the performance-based funding system from the bottom up, visiting a variety of member associations and regions, and enabling local management to make a strong contribution, we ensured a robust system with good buy-in,” Good says.

The new system creates an incentive to improve performance by disbursing additional funds to associations that make progress in how they score on one or more of the 10 indicators. Any association, regardless of its starting level, that achieves a year-over-year improvement receives additional funding both for that improvement and for its contribution to performance gains made by its region. (If an association outperforms all other associations in its region, moreover, it will receive a larger reward.) By using a diverse array of indicators, the new system strikes a balance between serving IPPF goals and giving associations flexibility in how they meet those goals. An association that excels at providing services to adolescents, for instance, would be rewarded for its performance in that area while also being encouraged to start building a more balanced portfolio of services.

IPPF leaders have structured the incentive system to ensure that variations in funding levels are not so large as to create management problems. To prevent large fluctuations in funding, the federation limits any increase or decrease in disbursements to 10 percent per year. Yet even relatively small financial rewards can foster a performance-based culture. Such funding allows associations to expand their services, it enables them to create specific performance standards, and it brings the issue of performance to the top of managers’ agendas.
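The 10 percent limit described above can be pictured as a simple clamp on each year's change in funding. The short Python sketch below is illustrative only. The function name, the dollar figures, and the idea that the indicator scores produce a single "proposed" grant are assumptions made for the example, not IPPF's actual allocation formula.

```python
def capped_disbursement(previous: float, proposed: float, cap: float = 0.10) -> float:
    """Return the proposed grant, limited to +/- `cap` of last year's grant.

    `previous` is last year's disbursement and `proposed` is the amount that
    a performance score would suggest (a hypothetical input). The 10 percent
    default mirrors the annual limit described in the article.
    """
    if previous < 0 or cap < 0:
        raise ValueError("previous and cap must be non-negative")
    band = previous * cap              # largest allowed change in either direction
    lower = previous - band
    upper = previous + band
    return max(lower, min(proposed, upper))


if __name__ == "__main__":
    # Hypothetical association that received 100,000 last year.
    for proposed in (130_000, 104_000, 70_000):
        print(proposed, "->", capped_disbursement(100_000, proposed))
```

Under these assumptions, a strong year still moves an association's grant upward and a weak year moves it downward, but never by more than a tenth, which is the property that keeps budgets manageable.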

Retooling a Global Federation

By the summer of 2012, it was clear that the pilot was succeeding. Participating member associations were paying much greater attention to the collection and accurate reporting of performance data than they had previously. Melesse, now serving in his first year as director general, was eager to continue the initiative that Good’s task force had begun. He proposed rolling out the new performance-based funding system across the entire federation by the end of 2012—a full year ahead of schedule—and the IPPF board approved his proposal.

A nurse examines a child at an IPPF-affiliated family care center in the town of Eldoret. (Photo by Graeme Robertson, courtesy of IPPF/Kenya)

Yet it was not enough merely to create an incentive system. Many associations wanted to improve their performance but lacked the capacity for doing so. IPPF leaders recognized that the federation had to match the new demands that it was placing on associations with a commitment to helping them meet those expectations. Various resources to assist member associations were already in place. The federation provided targeted funds to support capacity building and technical assistance, for example, and it had recently implemented a management information system that standardized the collection and transmission of service data.

What was lacking, however, was a system that would serve not just the needs of IPPF leaders in regional offices or in the secretariat, but also the needs of leaders at the association level. For many years, performance measurement within IPPF had primarily taken the form of reporting by member associations to regional offices and then to the secretariat: Associations gathered service data under difficult conditions and sent that information up the organizational chain, but they received little in return for their effort.

In the fall of 2012, Good and his task-force colleagues began rolling out a new instrument called the Branch Performance Tool. Through a series of workshops held in various regions, task-force members showed association leaders how to use the tool, gathered feedback on its design, and steadily built support for it. Designed exclusively for the benefit of member associations, the Branch Performance Tool allows association leaders to review clinic- and branch-level performance and to identify opportunities for increased efficiency. Using easily available data, the tool provides simple ratios (clients per staff member per day, for example, or services per dollar) that enable leaders to compare the performance records of different branches. Leaders at Reproductive Health Uganda (RHU), for example, used the tool to discover that effective volunteer recruitment had helped one branch keep its costs very low in comparison with other branches.
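To make the two ratios named above concrete, here is a minimal Python sketch of how they might be computed and used to compare branches. It is not the Branch Performance Tool itself. The `Branch` fields, the function names, and the sample figures are all hypothetical and chosen only to show the arithmetic.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Branch:
    """Hypothetical monthly record for one branch or clinic."""
    name: str
    clients: int       # clients seen in the period
    services: int      # services delivered in the period
    staff: int         # staff members working at the branch
    days_open: int     # days the branch operated in the period
    cost_usd: float    # total operating cost for the period


def clients_per_staff_day(b: Branch) -> float:
    """Clients per staff member per day."""
    return b.clients / (b.staff * b.days_open)


def services_per_dollar(b: Branch) -> float:
    """Services delivered per dollar spent."""
    return b.services / b.cost_usd


def rank_by(branches: List[Branch], ratio: Callable[[Branch], float]) -> List[Branch]:
    """Order branches from highest to lowest on the chosen ratio."""
    return sorted(branches, key=ratio, reverse=True)


if __name__ == "__main__":
    # Made-up data for two branches.
    branches = [
        Branch("Branch A", clients=1200, services=3000, staff=4, days_open=20, cost_usd=6000.0),
        Branch("Branch B", clients=900, services=1800, staff=5, days_open=20, cost_usd=9000.0),
    ]
    for b in rank_by(branches, services_per_dollar):
        print(b.name, clients_per_staff_day(b), services_per_dollar(b))
```

Ratios like these do not say why one branch outperforms another. They only flag where a manager might look more closely, as RHU's leaders did when they traced one branch's low costs to volunteer recruitment.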

Member associations now have an incentive to improve their data collection. In doing so, of course, they help the federation with its data collection efforts. But association leaders are in charge: The tool can guide them, but it doesn’t dictate to them. In fact, it empowers them. It identifies clinics that are weaker than comparable clinics and offers options for making those clinics more efficient. A clinic might shift some of its work from highly trained medical professionals to support-staff members, for example. Or, in a more extreme case, an association might relocate a clinic to an area where demand is higher or where costs are lower. The association in Thailand, for instance, found that the savings from moving one clinic to a lower-cost location would free up funding for an additional clinic. Association leaders, Anand notes, are now “able to take proactive decisions.”

Organizing data through the Branch Performance Tool enables association leaders to clarify the true cost of various activities and the trade-offs inherent in choosing one strategic path over another. That kind of information helps leaders make better decisions, and it also helps them demonstrate to board members and funders the value that their association provides. “We must constantly ask ourselves: Have we achieved what we need to [achieve] with donor funds?” says Lucien Kouakou, director of the Africa regional office. “We need reports that show donors our performance and show that we are improving.”

Talking About Performance

By the fall of 2013, Good and his task-force colleagues knew that their effort had paid off. “The federation has seen a strong improvement in performance over the past couple of years,” he says. “Performance-based funding and the introduction of tools such as the Branch Performance Tool have really helped to support this.” Over the previous four years, IPPF had doubled the number of sexual and reproductive health services that its member associations provided to clients—from 68.5 million in 2009 to nearly 137 million in 2013. The federation was well on its way to reaching the goal for 2015 that Melesse had established when he took office as director general. Indeed, so impressive were IPPF’s performance gains that Melesse decided to set an even loftier goal: Starting from the launch of the performance initiative in 2010, IPPF would aim to triple its level of service delivery by 2020. In just 10 years, it would go from providing 88 million services to providing 264 million services.

No doubt better data collection accounts in part for these higher performance numbers. Association leaders, after all, are now more likely to record all the services that their organizations deliver. “Across the federation, we have seen an improvement in data collection and data quality, which have had some impact on our service statistics,” says Good.

Yet IPPF’s new performance culture surely had a much greater impact on its ability to generate gains in service delivery. That cultural shift was evident in a growing enthusiasm for the new funding system. “Many member associations used to complain about the nonscientific approach to their annual budget allocation,” says Paulin Tra, performance and knowledge manager for the Africa region. “Now they support the allocation process, because we are able to promote transparency through the performance-based funding system.” Association leaders who initially doubted the value of data collection also changed their view. Today, Tra says, they are “using evidence to do better.” Anand notes that a similar shift has occurred in his region: “Member associations, at the most senior level, started looking at their data more closely and started questioning their outputs.”

Achievements at the association level show just how significant the performance gains across IPPF have been. Consider RHU, the Ugandan association, which participated both in the pilot of the new funding system and in testing the Branch Performance Tool. After analyzing its service record, RHU dramatically increased the number of mobile clinics that it deploys to remote communities and refugee camps. Since 2009, RHU has tripled its reported level of contraceptive service delivery, and it has eliminated the multiday waiting lines that were once a feature of some of its clinics. The Branch Performance Tool “helps us to focus on efficiency, it helps us to focus on outputs, and it is a motivation to do more,” says Jackson Chekweko, executive director of RHU.

Another association, the Reproductive Health Association of Cambodia (RHAC), responded to IPPF’s new performance culture by initiating several big strategic changes. It closed two low-performing clinics, moved its clinics to locations that are closer to women who need its services, and expanded its service offerings. Partly as a result, the number of services delivered annually by RHAC nearly doubled from 2009 to 2012.

By promoting increased efficiency and by capturing data on all the services they deliver, IPPF and its member associations are better able to demonstrate their effectiveness to funders—and funders have taken note of that change. In October 2012, a London-based consulting and research firm called Social Development Direct issued a report on IPPF for DFID in the United Kingdom. The firm gave the federation high marks, citing “good evidence for cost effectiveness and value for money in specific cases that can be generalised across the Federation.” Other donors, including the US government, have also boosted their funding of IPPF.

“The way we talk about performance has really improved,” Tra notes. Member associations now see that by embracing a performance culture, they can serve more clients and serve those clients better—and the better they serve their clients, the more funding they receive. Today, with added funding and new tools, associations are relocating branches to improve accessibility and to reduce costs, they are conducting community outreach to raise awareness of what they offer, and they are training and hiring staff members so that they can provide additional services.

For Melesse and other IPPF leaders, that outcome demonstrates that it’s possible to manage performance—even within a federated structure. “We needed our member associations to buy into this process, and in order to achieve that fundamental goal, we needed to show we could succeed,” he says. “By any reckoning, this was a substantial culture change, and its effects will be felt for years to come as we work to help many more women and girls.”

Read more stories by Margot Fahnestock, Lee Green & Jason Blau.