(Illustration by Mitch Blunt)

SPONSORED SUPPLEMENT TO SSIR PRODUCED FOR THE COLLECTIVE IMPACT FORUM

Collective Insights on Collective Impact

This special supplement features the most recent thinking and learning about how to use the collective impact approach to address large-scale social and environmental problems.

- Essential Mindset Shifts for Collective Impact
- Defining Quality Collective Impact
- The Role of Grantmakers in Collective Impact
- Power Dynamics in Collective Impact
- Roundtable on Community Engagement and Collective Impact
- Aligning Collective Impact Initiatives
- Learning in Action: Evaluating Collective Impact
- Achieving Collective Impact for Opportunity Youth
- Making Public Policy Collective Impact Friendly

As leaders across the social sector adopt the collective impact approach to problem solving, an important question looms in many people’s minds: Given how complex and unpredictable the work is, what is the best way to evaluate a collective impact initiative’s progress and success?

Traditionally, evaluations of specific interventions have focused on their results to determine whether or not (and how) they have “worked.” But collective impact initiatives involve multiple activities, programs, and initiatives, all of which operate in mutually reinforcing ways. Moreover, they aim to change highly complex systems. As a result, merely taking a snapshot of a given intervention’s effectiveness at one point does not tell the whole story. To truly evaluate their effectiveness, collective impact leaders need to see the bigger picture—the initiative’s many different parts and the ways they interact and evolve over time. For that, they need a new way to approach evaluation. We believe that effectively evaluating collective impact requires the following practices.

First, rather than attempting to isolate the effects and impact of a single intervention, collective impact partners should assess the progress and impact of the changemaking process as a whole. This process includes the initiative’s context; the quality and effectiveness of the initiative’s structure and operations; the ways in which systems that influence the targeted issue are changing; and the extent of progress toward the initiative’s ultimate goal(s). To be sure, the relative emphasis of evaluation will shift as the collective impact initiative matures. For example, an initial evaluation might assess the strength of the initiative itself, and a subsequent evaluation might focus on the initiative’s influence on targeted systems.

Second, rather than use performance measurement and evaluation to determine success or failure, collective impact partners should use the information they provide to make decisions about adapting and improving their initiative. To that end, collective impact partners should embed evaluation and learning into their initiative’s DNA, rather than treating it as an annual (or quarterly) exercise.

Embracing this comprehensive, adaptive approach to evaluating collective impact requires leaders to do three things differently. As we explain in the sections that follow, they should “ask what,” “ask why,” and “ask often.”

Ask What

First, collective impact partners should assess the progress and effectiveness of the changemaking process as a whole. This exercise requires examining four levels of the initiative: the initiative’s context, the initiative itself, the systems that the initiative targets, and the initiative’s ultimate outcomes.

The initiative’s context | Context refers to everything that influences an initiative’s design, implementation, and effectiveness. It includes economic conditions, demographics, media focus, political will, funding availability, leadership, and culture, among other factors. Changes in context are inevitable and often are important in supporting or hindering an initiative’s success. For example, just as Washington State’s Road Map Project began to form in 2012, its leaders learned that they could apply for a federal Race to the Top district award. They successfully organized themselves and won a $40 million award. The influx of financial support significantly boosted the initiative’s capacity and accelerated the implementation of its priority strategies.1

To see how changes in context can influence an initiative’s outcomes, consider the final evaluation of Shape Up Somerville, a Massachusetts-based collective impact initiative focused on reducing citywide rates of obesity. The evaluation included an analysis of the city’s changing demographics. As its leaders noted: “If a community becomes more racially diverse over time, as is the case in Somerville, obesity rates would be expected to rise.”2 Without taking local demographic changes into account, the initiative’s collaborators could not have fully understood the effectiveness of their efforts.

The initiative itself | For any collective impact initiative, changing the way organizations and individuals interact with each other and approach complex problem-solving is an important, if often implicit, goal. The real power of the collective impact approach lies in the process—the ability to unite diverse groups around a common purpose, encourage open discussion and ongoing communication, support coordination and alignment of activities, and promote learning and continuous improvement. For example, an evaluation of Vibrant Communities, a pan-Canadian antipoverty initiative, found that the “multi-sectoral nature of Vibrant Communities helps government move on [policy] change because proposals are already vetted from multiple interests in the community.”3

Similarly, Shape Up Somerville attributes its success largely to its “multi-level approaches to promote active living and healthy eating.”4 The initiative engaged public schools, city government leaders, academic researchers, civic organizations, community groups, businesses (including restaurants), and residents in an integrated approach to problem solving that facilitated systems-level change. Ultimately, the initiative succeeded in decreasing childhood obesity rates throughout the city of Somerville.5

Assessing the progress and effectiveness of the collective impact changemaking process as a whole requires an explicit focus on the initiative’s design and implementation. (See “Assessing an Initiative’s Design and Implementation” above.) Although collective impact leaders may question the value of evaluating process, we urge them to pay careful attention to the quality and strength of their initiative itself, especially in its early years. This is a time when critically important decisions are made and learning is invaluable.

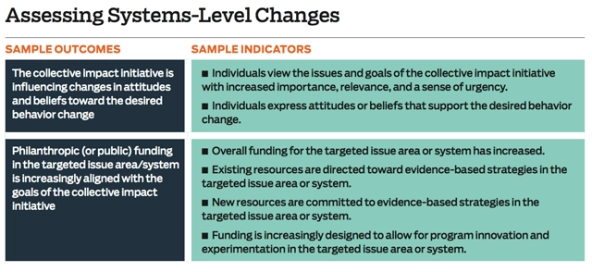

The systems that the initiative targets | Most collective impact initiatives have hugely ambitious goals: Not only do they seek to tackle complex problems, but they also try to create large-scale change. Achieving this level of impact, in a way that’s sustainable over time, requires collective impact initiatives to make significant changes in systems (by influencing cultural norms, public policies, and funding flows) as well as patterns of behavior (including changes in professional practice or changes in individual behavior). These systems-level changes create the conditions that allow collective impact initiatives to achieve their ultimate objectives. (See “Assessing Systems-Level Changes” below.) Shape Up Somerville, for example, attributes part of its success to a constellation of systems-level changes. These included increased funding for anti-obesity work; healthier menu offerings in public schools and at more than 40 local restaurants; new bicycle lanes and improvements to public park infrastructure; improved nutritional standards in schools and other public institutions; and improvements in physical education equipment, facilities, and activities in schools and after-school programs.

The initiative’s ultimate outcomes | As the initiative matures, collective impact partners should keep a watchful eye on their ultimate goals. It is normal for initiatives to make slow or minimal progress toward their goals in the early years, but collective impact partners should expect to achieve meaningful, measurable change within three to four years. They should track this progress over time using the initiative’s shared measurement system in addition to more robust evaluations.

Ask Why

Collective impact partners should use the results of their evaluative activities to make smart decisions about adapting and improving the initiative. To make such decisions, funders must complement performance measurement activities (which focus on determining what is happening) with other types of evaluation aimed at understanding how and why change is happening.

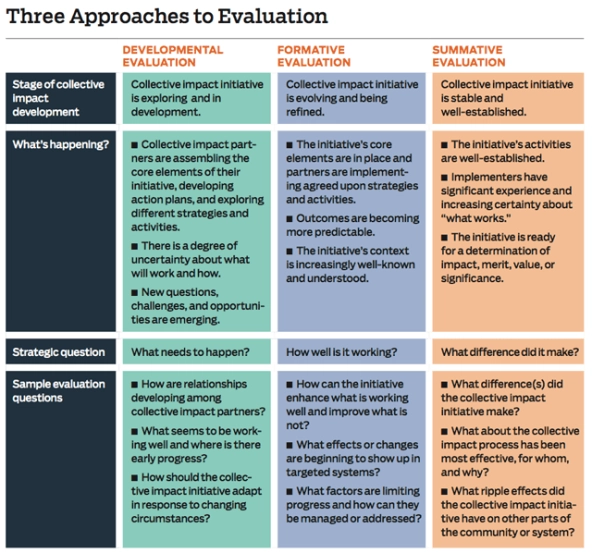

Collective impact partners can employ three different approaches to evaluation at different points in an initiative’s lifetime: developmental evaluation, formative evaluation, and summative evaluation. As “Three Approaches to Evaluation” (below) outlines, each approach can help answer different questions. (For more detail on the three approaches, see “Guide to Evaluating Collective Impact,” available at www.fsg.org.)

These approaches to evaluation are not mutually exclusive. Collective impact partners can and should use a combination of approaches over time. For example, Vibrant Communities in Canada used developmental evaluation to explore changes in context and potential implications for the initiative, and simultaneously used formative evaluation to refine its existing efforts. Later, the initiative used summative evaluation to look back on its effectiveness and overall impact.

Ask Often

In the context of collective impact, the purpose of performance measurement and evaluation is to support learning, and the goal is to enable continuous improvement. We suggest that collective impact partners follow these steps to effective evaluation:

Start early | Even before an initiative’s shared measurement system becomes operational, collective impact partners can monitor a set of early performance indicators that focus on the quality of the initiative’s design and implementation. They can also use elements of developmental evaluation to provide insight into the effectiveness of the initiative’s early efforts. For example, an infant mortality initiative in rural Missouri uses developmental evaluation to better understand how contextual factors and cultural dynamics influence the development of the strategy. The partners are working with a team of evaluation coaches to ask such questions as “What does the problem of infant mortality look like from the perspective of different stakeholders in our region, and what are the implications for the design of our collective impact initiative?”6

Embed learning into the initiative’s DNA | To make learning a regular, active, and applied process, collective impact partners should establish clear learning structures and processes. For example, they can create space for group reflection at the start of meetings or periodically survey participants to identify pressing issues. These processes encourage the partners to exchange information, ideas, and questions and are thus critical to the initiative’s continuous improvement.

Allocate resources appropriately | Because learning is central to collective impact success, ongoing investment in performance measurement and evaluation is crucial. For many collective impact initiatives, ongoing measurement requires dedicating a part-time or full-time employee to organize, oversee, embed, and apply lessons learned across the initiative. For others, it means looking for external support in the form of a coach, technical assistance provider, or professional evaluator. The majority of collective impact initiatives will likely rely on a combination of internal and external evaluation resources at different times. Regardless of the composition of the evaluation team, we urge collective impact partners to plan carefully for the financial resources and personnel they will need to support a robust approach to performance measurement and evaluation. After all, as a recent report from Grantmakers for Effective Organizations put it, “When you look at evaluation as a means of learning for improvement, … investments in evaluation seem worthwhile because they can yield information needed for smarter and faster decisions about what works.”7

Conclusion

Effective collective impact evaluation needs to be multi-faceted, flexible, and adaptive, but it does not need to be exhaustive or extremely expensive. Evaluation efforts come in all shapes and sizes—the scope and scale of any individual evaluation will depend on the time, capacity, and resources available. Moreover, the focus of evaluation (including questions, outcomes, and indicators) will change as the initiative matures. The most effective collective impact initiatives will be those that seamlessly integrate learning and evaluation into their work from the beginning, allow those processes to evolve alongside their initiative, and use them as a guide for the future.

Read more stories by Marcie Parkhurst & Hallie Preskill.